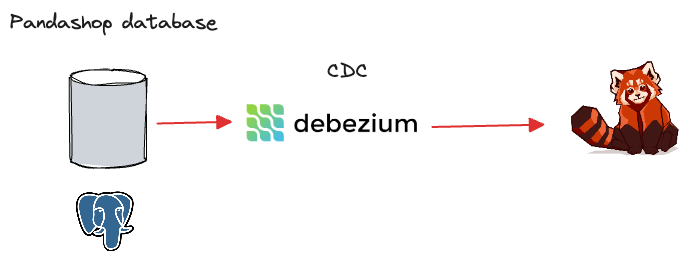

Set Up Postgres CDC with Debezium and Redpanda

This example demonstrates using Debezium to capture the changes made to Postgres in real time and stream them to Redpanda.

This ready-to-run docker-compose setup contains the following containers:

- `postgres` container with the `pandashop` database, containing a single table, `orders`.
- `debezium` container capturing changes made to the `orders` table in real time.
- `redpanda` container to ingest change data streams produced by `debezium`.

For more information about the `pandashop` schema, see the `/data/postgres_bootstrap.sql` file.

Prerequisites

You must have Docker and Docker Compose installed on your host machine.

This lab is intended for Linux and macOS users. If you are using Windows, you must use the Windows Subsystem for Linux (WSL) to run the commands in this lab.

Run the lab

- Clone this repository:

      git clone https://github.com/redpanda-data/redpanda-labs.git

- Change into the `docker-compose/cdc/postgres-json/` directory:

      cd redpanda-labs/docker-compose/cdc/postgres-json

- Set the `REDPANDA_VERSION` environment variable to the version of Redpanda that you want to run. For all available versions, see the GitHub releases.

  For example:

      export REDPANDA_VERSION=v26.1.9

- Run the following in the directory where you saved the Docker Compose file:

      docker compose up -d

  When the `postgres` container starts, the `/data/postgres_bootstrap.sql` file creates the `pandashop` database and the `orders` table, followed by seeding the `orders` table with a few records.

- Log into Postgres:

      docker compose exec postgres psql -U postgresuser -d pandashop

- Check the content inside the `orders` table:

      select * from orders;

  This is the source table.

- While Debezium is up and running, create a source connector configuration to extract change data feeds from Postgres:

      docker compose exec debezium curl -H 'Content-Type: application/json' debezium:8083/connectors --data '
      {
        "name": "postgres-connector",
        "config": {
          "connector.class": "io.debezium.connector.postgresql.PostgresConnector",
          "plugin.name": "pgoutput",
          "database.hostname": "postgres",
          "database.port": "5432",
          "database.user": "postgresuser",
          "database.password": "postgrespw",
          "database.dbname": "pandashop",
          "database.server.name": "postgres",
          "table.include.list": "public.orders",
          "topic.prefix": "dbz"
        }
      }'

  Notice the `database.*` configurations specifying the connectivity details to the `postgres` container. Wait a minute or two until the connector gets deployed inside Debezium and creates the initial snapshot of change log topics in Redpanda.

- Check the list of change log topics in Redpanda:

      docker compose exec redpanda rpk topic list

  The output should contain the `dbz.public.orders` topic, which carries the `dbz` prefix specified as `topic.prefix` in the connector configuration. This topic holds the initial snapshot of change log events streamed from the `orders` table.

      NAME               PARTITIONS  REPLICAS
      connect-status     5           1
      connect_configs    1           1
      connect_offsets    25          1
      dbz.public.orders  1           1

- Monitor for change events by consuming the `dbz.public.orders` topic:

      docker compose exec redpanda rpk topic consume dbz.public.orders

- While the consumer is running, open another terminal to insert a record into the `orders` table:

      export REDPANDA_VERSION=v26.1.9
      docker compose exec postgres psql -U postgresuser -d pandashop

- Insert the following record:

      INSERT INTO orders (customer_id, total) values (5, 500);

This triggers a change event in Debezium, which immediately publishes it to the `dbz.public.orders` Redpanda topic, and the consumer displays the new event in the console. This confirms that your CDC pipeline works end to end.
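To make the change event shown by the consumer easier to read, the following is a minimal Python sketch that pulls the operation and row images out of one event value. It assumes the standard Debezium envelope fields (`before`, `after`, `op`) and tolerates the `schema`/`payload` wrapper that the JSON converter adds when schemas are enabled. The function name `summarize_change_event` and the sample event are hypothetical. Real events carry more fields, and the exact columns and value encodings depend on the `orders` table definition.

```python
import json

# Debezium operation codes carried in the "op" field of each change event.
OP_NAMES = {"c": "insert", "u": "update", "d": "delete", "r": "snapshot read"}


def summarize_change_event(raw_value: str) -> dict:
    """Extract the operation and row images from one change event value."""
    doc = json.loads(raw_value)
    # With schemas enabled in the JSON converter, the event is wrapped as
    # {"schema": ..., "payload": ...}; otherwise the envelope is top level.
    envelope = doc.get("payload", doc)
    return {
        "operation": OP_NAMES.get(envelope.get("op"), "unknown"),
        "before": envelope.get("before"),
        "after": envelope.get("after"),
    }


# Hypothetical, trimmed event for the INSERT above. Only the two columns
# named in the INSERT statement are shown; real events include more fields.
sample = json.dumps({
    "payload": {
        "before": None,
        "after": {"customer_id": 5, "total": 500},
        "op": "c",
    }
})

print(summarize_change_event(sample))
```

For an insert, `before` is empty and `after` holds the new row. Updates and deletes populate `before` according to the table's replica identity settings.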

Clean up

To shut down and delete the containers along with all your cluster data:

    docker compose down -v

Next steps

Now that change log events are ingested into Redpanda, you can process them to enable use cases such as:

- Database replication
- Stream processing applications
- Streaming ETL pipelines
- Update caches
- Event-driven microservices
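As an illustration of the cache use case, here is a minimal Python sketch that applies Debezium-style change envelopes (with `op`, `before`, and `after` fields) to an in-memory dictionary. It is not part of the lab. The `apply_change` helper and the `order_id` key column are assumptions made for the example, so check `/data/postgres_bootstrap.sql` for the actual primary key. The events are hand-written rather than read from Redpanda.

```python
from typing import Any, Dict

Row = Dict[str, Any]


def apply_change(cache: Dict[Any, Row], event: Row, key_column: str = "order_id") -> None:
    """Apply one change envelope to the cache, keyed by key_column."""
    op = event.get("op")
    if op in ("c", "r", "u"):
        # Inserts, snapshot reads, and updates all carry the new row in "after".
        row = event["after"]
        cache[row[key_column]] = row
    elif op == "d":
        # Deletes carry the old row (at least its key) in "before".
        row = event["before"]
        cache.pop(row[key_column], None)
    # Other operation codes are ignored in this sketch.


# Hypothetical events; "order_id" is an assumed primary key column.
events = [
    {"op": "r", "before": None, "after": {"order_id": 1, "customer_id": 1, "total": 100}},
    {"op": "c", "before": None, "after": {"order_id": 2, "customer_id": 5, "total": 500}},
    {"op": "u", "before": None, "after": {"order_id": 2, "customer_id": 5, "total": 550}},
    {"op": "d", "before": {"order_id": 1}, "after": None},
]

cache: Dict[Any, Row] = {}
for event in events:
    apply_change(cache, event)

print(cache)
```

Replaying events in order keeps the cache aligned with the source table. A real consumer would read the events from the `dbz.public.orders` topic with a Kafka-compatible client and would also need to handle restarts and reprocessing.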